Tags

Abatement Cost, Carbon Abatement, Carbon Forcings, Carbon Limits, Charles Hooper, Climate models, Cloud Formation, Confidence Interval, David Henderson, Earth Day, Measurement Error, Natural Climate Variation, Solar Forcings, Statistical Precision, Surface Temperatures, Temperature Aggregation, William Nordhaus

Last week I mentioned some of the inherent upward biases in the earth’s more recent surface temperature record. Measuring a “global” air temperature at the surface is an enormously complex task, requiring the aggregation of measurements taken using different methods and instruments (land stations, buoys, water buckets, ship water intakes, different kinds of thermometers) at points that are unevenly distributed across latitudes, longitudes, altitudes, and environments (sea, forest, mountain, and urban). Those measurements must be extrapolated to surrounding areas that are usually large and environmentally diverse. The task is made all the more difficult by the changing representation of measurements taken at these points, and changes in the environments at those points over time (e.g., urbanization). The spatial distribution of reports may change systematically and unsystematically with the time of day (especially onboard ships at sea).

The precision with which anything can be measured depends on the instrument used. Beyond that, there is often natural variation in the thing being measured. Some thermometers are better than others, and the quality of these instruments has varied tremendously over the roughly 165-year history of recorded land temperatures. The temperature itself at any location is subject to variation as the air shifts, but temperature readings are like snapshots taken at points in time, and may not be representative of areas nearby. In fact, the number of land weather stations used in constructing global temperatures has declined drastically since the 1970s, which implies an increasing error in approximating temperatures within each expanding area of coverage.

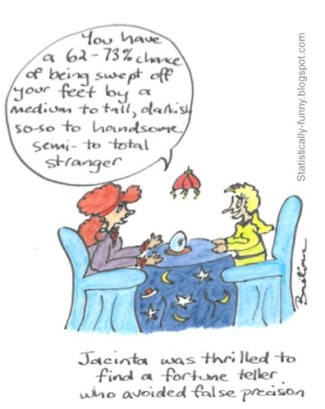
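The point about fewer stations and larger approximation error can be illustrated with a back-of-the-envelope calculation. This is only a sketch under simplifying assumptions that are not from the original discussion: readings within an area are treated as independent draws with a common spread `sigma`, and the station counts are hypothetical round numbers rather than actual network totals. The function name `standard_error_of_mean` is likewise just for illustration.

```python
import math

def standard_error_of_mean(sigma, n_stations):
    """Standard error of an average of n independent readings with spread sigma."""
    return sigma / math.sqrt(n_stations)

sigma = 1.0  # hypothetical spread of readings within an area, in degrees C

# Hypothetical station counts: the second is one quarter of the first.
se_many = standard_error_of_mean(sigma, 400)
se_few = standard_error_of_mean(sigma, 100)

print(f"standard error with 400 stations: {se_many:.3f}")
print(f"standard error with 100 stations: {se_few:.3f}")
print(f"ratio: {se_few / se_many:.1f}")
```

Under these assumptions, cutting the number of stations to a quarter doubles the sampling error of the area average. Real station networks are not independent random samples, so this shows only the direction of the effect, not its actual size.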

The point is that a statistical range of variation exists around each temperature measurement, and additional error is introduced by the vagaries of the aggregation process. David Henderson and Charles Hooper discuss how temperature measurement errors are handled in aggregation and in discussions of climate change. The upward trend in the “global” surface temperature between 1856 and 2004 was about 0.8° C, but the 95% confidence interval around that change is ±0.98° C. (I believe that interval is probably too narrow, given the sketchiness of the early records.) In other words, from a statistical perspective, one cannot reject the hypothesis that the global surface temperature was unchanged over the full period.
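The arithmetic behind that statement can be checked directly. The sketch below is only an illustration: it assumes the ±0.98° C figure is a symmetric 95% interval around a normally distributed estimate (an assumption on my part, not something stated in the Henderson and Hooper piece), and the function name `interval_and_z` is made up for this example.

```python
from statistics import NormalDist

def interval_and_z(estimate, half_width, level=0.95):
    """Return (lower, upper, implied standard error, z statistic).

    Assumes a symmetric interval from a normal sampling distribution.
    """
    z_crit = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for a 95% level
    std_err = half_width / z_crit                   # standard error implied by the half-width
    return estimate - half_width, estimate + half_width, std_err, estimate / std_err

# Figures quoted in the text: a 0.8 degree C change with a +/-0.98 degree C interval.
lower, upper, std_err, z = interval_and_z(0.8, 0.98)
includes_zero = lower <= 0.0 <= upper

print(f"95% interval: [{lower:.2f}, {upper:.2f}] degrees C")
print(f"implied standard error: {std_err:.2f}, z statistic: {z:.2f}")
print(f"interval includes zero: {includes_zero}")
```

Under those assumptions the interval runs from about -0.18° C to 1.78° C and the z statistic is about 1.6, short of the roughly 1.96 needed to reject "no change" at the 95% level.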

Henderson and Hooper make some other salient points related to the negligible energy impulse from carbon forcings relative to the massive impact of variations in solar energy and the uncertainty around the behavior of cloud formation. It’s little wonder that climate models relying on a carbon-forcing impact have erred so widely and consistently.

In addition to reinforcing the difficulty of measuring surface temperatures and modeling the climate, the implication of the Henderson and Hooper article is that policy should not be guided by measurements and models subject to so much uncertainty and such minor impulses or “signals”. The sheer cost of abating carbon emissions is huge, though some means of doing so are better than others. Costs increase with the degree of abatement (or of replacement with low-carbon alternatives), and I suspect that the incremental benefit decreases. Strict limits on carbon emissions reduce economic output. On a broad scale, that would impose a sacrifice of economic development and incomes on the non-industrialized world, not to mention low-income minorities in the developed world.

One well-known estimate by William Nordhaus involved a 90% reduction in world carbon emissions by 2050. He calculated a total long-run cost of between $17 trillion and $22 trillion. Annually, the cost was about 3.5% of world GDP. The climate model Nordhaus used suggested that the reduction in global temperatures would be between 1.3° and 1.6° C, but in view of the foregoing, that range is highly speculative and likely to be an extreme exaggeration. And note the small width of the “confidence interval”. That range is not at all a confidence interval in the usual sense; it is a “stab” at the uncertainty in a forecast of something many years hence. Nordhaus could not possibly have considered all sources of uncertainty in arriving at that range of temperature change, least of all the errors in measuring global temperature to begin with.

Climate change activists would do well to spend their Earth Day educating themselves about the facts of surface temperature measurement. Their usual prescription is to extract resources and coercively deny future economic gains in exchange for steps that might or might not solve a problem they insist is severe. The realities are that the “global temperature” is itself subject to great uncertainty, and its long-term trend over the historical record cannot be distinguished statistically from zero. In terms of impact on the climate, natural forces are much more powerful than carbon forcings. And the models on which activists depend are so rudimentary, and so error-prone and biased historically, that taking your money to solve the problem implied by their forecasts is utter foolishness.
