
Elon Musk says we should be very scared of artificial intelligence (AI). He believes it poses an “existential risk” to humanity and calls for “proactive regulation” of AI to limit its destructive potential. His argument encompasses “killer robots”: “A.I. & The Art of Machine War” is a good read and is consistent with Musk’s message. Military applications already involve autonomous machine decisions to terminate human life, but the Pentagon is weighing whether decisions to kill should be made only by humans. Musk also focuses on more subtle threats from machine intelligence: it could be used to disrupt power and communication systems, to manipulate human opinion in dangerous ways, and even to sow panic via cascades of “fake robot news”, leading to a breakdown in civil order. Musk has also expressed a fear that AI could have disastrous consequences in commercial applications with runaway competition for resources. He sounds like a businessman who really dislikes competition! After all, market competition is self-regulating and self-limiting. The most “destructive” effects occur only when competitors come crying to the state for relief!

Several prominent tech leaders and AI experts have disputed Musk’s pessimistic view of AI, including Mark Zuckerberg of Facebook and Eric Schmidt, chairman of Google’s parent company, Alphabet, Inc. Schmidt says:

“My question to you is: don’t you think the humans would notice this, and start turning off the computers? We’d have a race between humans turning off computers, and the AI relocating itself to other computers, in this mad race to the last computer, and we can’t turn it off, and that’s a movie. It’s a movie. The state of the earth currently does not support any of these scenarios.”

Along those lines, Google’s AI lab, DeepMind, has developed an AI off-switch, otherwise known as the “big red button”. It relies on human supervision of AI processes and on ensuring that those processes can be interrupted.

Another obvious point is that AI, ideally, would operate under one or more explicit objective functions. This is the machine’s “reward system”, as it were. Could that reward system always be linked to human intent? To a highly likely non-negative human assessment of outcomes? To improved well-being? That’s not straightforward in a world of uncertainty, but it is at least clear that a relatively high probability of harm to humans should impose a large negative effect on any intelligent machine’s objective function.
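To make the idea concrete, here is a minimal sketch of what such a penalty might look like. It is purely illustrative: the function names, the penalty weight of 1000, and the candidate actions are all my own assumptions, not anything DeepMind or anyone else has published.

```python
def penalized_objective(task_reward, harm_probability, harm_penalty=1000.0):
    """Task reward minus a large penalty scaled by the probability of harm.

    harm_penalty is a hypothetical weight chosen only for illustration.
    """
    if not 0.0 <= harm_probability <= 1.0:
        raise ValueError("harm_probability must be between 0 and 1")
    return task_reward - harm_penalty * harm_probability


def choose_action(candidates):
    """Return the (name, reward, harm_probability) tuple with the best score."""
    return max(candidates, key=lambda c: penalized_objective(c[1], c[2]))


# Hypothetical actions: a high-reward risky one and a modest safe one.
candidates = [
    ("aggressive", 50.0, 0.10),
    ("cautious", 20.0, 0.001),
]
best = choose_action(candidates)
```

With a penalty weight that large, even a 10% chance of harm swamps the higher task reward, so the cautious action wins. The hard part, of course, is estimating the probability of harm in the first place.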

Those kinds of steps can be regarded as regulatory recommendations, which is what Musk has advocated. Musk has outlined a role for regulators as gatekeepers who would review and ensure the safety of any new AI application. Ronald Bailey reveals the big problem with this approach:

“This may sound reasonable. But Musk is, perhaps unknowingly, recommending that AI researchers be saddled with the precautionary principle. According to one definition, that’s ‘the precept that an action should not be taken if the consequences are uncertain and potentially dangerous.’ Or as I have summarized it: ‘Never do anything for the first time.’”

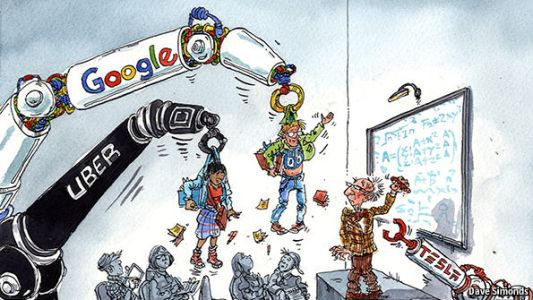

Regulation is the enemy of innovation, and there are many ways in which current and future AI applications can improve human welfare. Musk knows this. He is the consummate innovator and big thinker, but he is also skilled at leveraging the power of government to bring his ideas to fruition. All of his major initiatives, from Tesla to SpaceX, to Hyperloop, battery technology and solar roofing material, have gained viability via subsidies.

But another hallmark of crony capitalists is a willingness to use regulation to their advantage. Could proposed regulation be part of a hidden agenda for Musk? For example, what does Musk mean when he says, “There’s only one AI company that worries me” in the context of dangerous AI? His own company(ies)? Or another? One he does not own?

Musk’s startup OpenAI is a non-profit engaged in developing open-source AI technology. Musk and his partners in this venture argue that widespread, free availability of AI code and applications would prevent malicious use of AI. Musk knows that his companies can use AI to good effect as well as anyone. And he also knows that open-source AI can neutralize potential advantages for competitors like Google and Facebook. Perhaps he hopes that his first-mover advantage in many new industries will lead to entrenched market positions just in time for the AI regulatory agenda to stifle competitive innovation within his business space, providing him with ongoing rents. Well played, cronyman!

Any threat that AI will have catastrophic consequences for humanity is way down the road, if ever. In the meantime, there are multiple efforts underway within the machine learning community (which is not large) to prevent or at least mitigate potential dangers from AI. This is taking place independent of any government action, and so it should remain. That will help to maximize the potential for beneficial innovation.

Musk also asserts that robots will someday be able to do “everything better than us”, thus threatening the ability of the private sector to provide income to individuals across a broad range of society. This is not at all realistic. There are many detailed and nuanced tasks to which robots will not be able to attend without human collaboration. Creativity and the “human touch” will always have value and will always compete in input markets. Even if robots can do everything better than humans someday, an absolute advantage is not determinative. Those who use robot-intensive production processes will still find it advantageous to use labor, or to trade with those utilizing more labor-intensive production processes. Such are the proven outcomes of the law of comparative advantage.
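A quick back-of-the-envelope illustration may help. The numbers below are invented for the example (hypothetical "widgets" and "meals" produced per hour), and the function names are mine. Robots are better at both tasks, yet the opportunity costs still differ.

```python
from fractions import Fraction

# Hypothetical output per hour; robots hold the absolute advantage in both goods.
OUTPUT_PER_HOUR = {
    "robot": {"widgets": 10, "meals": 5},
    "human": {"widgets": 1, "meals": 2},
}


def opportunity_cost(producer, good, other):
    """Units of `other` given up to produce one unit of `good`."""
    rates = OUTPUT_PER_HOUR[producer]
    return Fraction(rates[other], rates[good])


def comparative_advantage(good, other):
    """Producer with the lowest opportunity cost of making `good`."""
    return min(OUTPUT_PER_HOUR, key=lambda p: opportunity_cost(p, good, other))


widget_specialist = comparative_advantage("widgets", "meals")
meal_specialist = comparative_advantage("meals", "widgets")
```

In this made-up case a robot gives up two widgets for every meal it makes, while a human gives up only half a widget. The robot owner does better by specializing in widgets and trading for meals, even though the robot is faster at both.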
