At the heart of the climate crisis narrative lies a huge weakness regarding something its believers take for granted: whether our measures of global temperature are meaningful, let alone reliable. The problems arise at the level of individual weather stations, in their siting and geographical distribution, and, perhaps even more critically, in the aggregation of their readings into the so-called Global Mean Surface Temperature (GMST).

The Weather Station Network

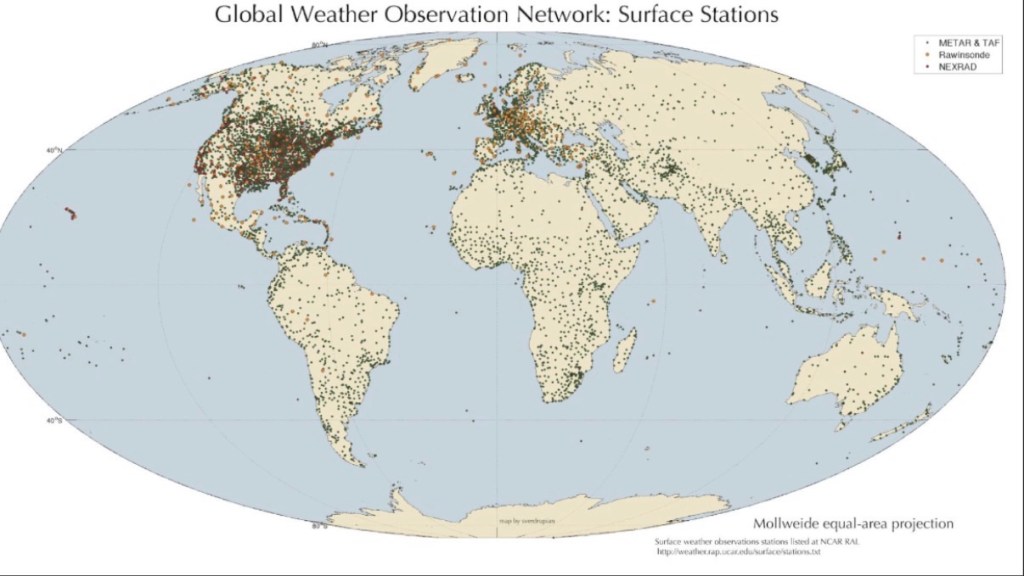

In the U.S. and worldwide we have about one weather station for every one thousand square miles. However, the geographic distribution of weather stations is highly uneven (see the map of land-based stations above) and is more sparse in rural areas than in urban environments. It’s also very sparse in highly remote and extreme environments.

At best, a temperature reading at a particular weather station might be approximately representative of its surrounding area at that moment. However, temperatures vary from place to place under the influence of many features of local geography. These include altitude, the presence of waterways and bodies of water, other surface features such as rock and greenery, and human land use. Thus, conditions at a given weather station might not be at all descriptive of the surrounding area.

Moreover, there are no well-defined geographic “zones” to which weather stations are assigned. Attempts to do so involve arbitrary and irregular boundaries and drastic variations in size. “Averaging” temperatures across such zones requires a crude attempt to assign weights based on distances and ultimately yields mongrelized statistics. Furthermore, daily temperature averages are based on averages of high and low temperatures at each station. Such an average might only describe the actual temperature at a station for an instant, but regardless of duration, the timing is likely to differ across any two stations. Not only that, but many weather stations do not record “daily” temperatures based on normal calendar days. Thus, temperature averages across stations are calculated across locations, extremes only, and time. And again, inputs of temperatures from the individual stations are not representative of their respective zones.
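To make the point about daily averages concrete, here is a minimal sketch comparing the midpoint of a day's high and low with the mean of hourly readings. The hourly values are invented for illustration and do not come from any real station.

```python
# Hypothetical hourly readings (deg C) for one station over one day.
# These numbers are made up purely for illustration.
hourly_c = [10, 10, 9, 9, 8, 8, 9, 11, 13, 15, 17, 19,
            21, 22, 23, 22, 20, 17, 15, 13, 12, 11, 11, 10]

# The conventional "daily average": midpoint of the high and the low.
midpoint = (max(hourly_c) + min(hourly_c)) / 2

# An alternative: the mean of all 24 hourly readings.
hourly_mean = sum(hourly_c) / len(hourly_c)

print(f"(high + low) / 2:    {midpoint:.2f}")
print(f"mean of 24 readings: {hourly_mean:.2f}")
```

With these made-up readings the two "daily averages" differ by about a degand a half, even though both summarize the same day at the same station.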

Deterioration in the quality of weather station sites has been the subject of sharp criticism over the years. There are now a large number of poorly sited stations, often located in close proximity to paved surfaces, concrete, metals, or exhaust fans. These kinds of features impart an upward bias to the local temperature record. Individually, these are small examples of the well-known urban heat island effect. In the aggregate, they create a substantial exaggeration in temperatures, accounting for about 50% of the estimated warming trend for the U.S.

According to this study, the upward bias is more severe for poorly sited stations, and the quality of siting often deteriorates over time as urban growth encroaches on outlying communities. Urban sites tend to warm the most, followed by semi-urban sites, followed by rural sites. Even worse, the study found that the NOAA temperature adjustment process creates a contagion of the warming bias, passing biases from poor sites along to better stations as an artifact. That is, the process adjusts temperatures upward for well-sited stations to more closely match poor sites!

Ocean temperatures present their own challenges. Several different techniques have been used over the years, but the most consistent and reliable ocean temperatures are from so-called ARGO buoys, which have been available only since 2003. Before that, ocean temperatures were taken using buckets dipped into the water from the sides of ships, and from engine water intakes. Unfortunately, error rates on reported observations from ARGO buoys (which involve several factors besides the accuracy of the thermometers themselves, such as transmission errors) are unknown, but they appear to be far outside acceptable limits. Thus, reasonably good sea surface records have only recently contributed to global temperature coverage, and even those are subject to great uncertainty. (Satellite temperature measurements, by the way, are really indirect estimates of temperatures based on radiance and subsequent calibrations.)

Thus, historical temperature records are an amalgam of different measurement instruments at different locations at different times of the day, adding layers of inconsistency to the calculation of temperature averages.

Physically Untethered

I was prompted to write this post after reading a mathematical analysis of the impossibility of aggregating temperature readings across multiple weather stations in any meaningful way. The analysis, by Jonathan Cohler, is a damning indictment of GMST as a concept. GMST relies on a series of calculations and transformations that are arbitrarily chosen from many unsuitable alternatives. Cohler says that such an “average temperature” calculation is necessarily “untethered” from the various states of nature it attempts to summarize.

Temperature itself is a so-called intensive quantity. That means it is independent of the size of the system it characterizes. If you combine a system with an identical twin, the temperature of the combined whole doesn’t double, unlike measures such as mass or volume. The latter are examples of extensive quantities.
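A small sketch may help fix the distinction. It assumes two parcels of the same substance with equal, constant specific heat and no heat exchange with the surroundings; the `Parcel` class, the `combine` function, and all numbers are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Parcel:
    mass_kg: float  # extensive: scales with the size of the system
    temp_k: float   # intensive: does not scale with the size of the system


def combine(a: Parcel, b: Parcel) -> Parcel:
    """Mix two parcels and let them reach equilibrium.

    Assumes the same substance, constant specific heat, and no heat lost
    to the surroundings, so the final temperature is mass-weighted.
    """
    total_mass = a.mass_kg + b.mass_kg
    temp = (a.mass_kg * a.temp_k + b.mass_kg * b.temp_k) / total_mass
    return Parcel(total_mass, temp)


# Identical twins: mass doubles, temperature is unchanged.
twin = combine(Parcel(1.0, 290.0), Parcel(1.0, 290.0))
print(twin)

# Unequal parcels: the result depends on the masses, not just the temperatures.
uneven = combine(Parcel(1.0, 280.0), Parcel(3.0, 300.0))
print(uneven)
```

In the second case the equilibrium temperature is 295 K, not the 290 K a simple average of the two readings would suggest. The temperatures alone do not determine the answer; an extensive quantity (here, mass) is needed to combine them.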

Temperatures vary from one spot to another within a given system while in disequilibrium, and of course they vary over the course of any day. However, the validity of a temperature measurement at a particular location and time requires a local state of equilibrium in the immediate vicinity of the measuring instrument. Otherwise, a temperature measurement would not be a valid descriptor of the condition of the (very local) system.

Faulty Aggregations

With that in mind, imagine the many arbitrary ways we can devise to aggregate temperatures across weather stations for which conditions differ drastically. These are all attempts to calculate a single temperature for a large and geographically uneven system in a continuing state of disequilibrium. And every combination of weather station temperatures represents an artificially combined “system” in a state of disequilibrium. That’s true of any two adjacent weather stations or of all the weather stations on the globe. No one method of doing so can claim validity as a measure of system-wide temperature. This contrasts with extensive quantities, for which well-defined rules of aggregation exist (e.g., summation) regardless of a system’s dynamic condition.

Over time, the temperature records involve a changing number of stations, changing local environmental conditions, changing accuracy, and a varying mix of seawater bucket measurements, ship engine water intake measurements, and ARGO floats. These disparities reinforce the impossibility of measuring wide-ranging “average” trends in temperature.

As Cohler demonstrates mathematically, these temperature averages are physically meaningless. He offers a crazy-sounding example of blending two intensive measurements: averaging the pH of your morning coffee with the pH of seawater at a nearby coast. This is very much of a kind with averaging temperatures across weather stations under disparate conditions. Furthermore, as noted above, the steps employed to arrive at the temperature to be used for each station, and the weight each station is assigned in the average, are hardly a unique set of calculations. There is an infinite number of equally invalid aggregations of the same data.
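The coffee-and-seawater example can be sketched numerically. This is not Cohler's calculation; it is a minimal illustration that uses the standard definition of pH as the negative base-10 logarithm of hydrogen ion concentration, with rough, assumed pH values of 5.0 for coffee and 8.1 for seawater.

```python
import math


def ph_to_conc(ph: float) -> float:
    """Hydrogen ion concentration (mol/L) implied by a pH value."""
    return 10.0 ** (-ph)


def conc_to_ph(conc: float) -> float:
    """pH implied by a hydrogen ion concentration (mol/L)."""
    return -math.log10(conc)


# Assumed, approximate values for illustration only.
coffee_ph, seawater_ph = 5.0, 8.1

# "Average" 1: arithmetic mean of the two pH readings.
mean_of_ph = (coffee_ph + seawater_ph) / 2

# "Average" 2: average the concentrations, then convert back to pH.
mean_conc = (ph_to_conc(coffee_ph) + ph_to_conc(seawater_ph)) / 2
ph_of_mean_conc = conc_to_ph(mean_conc)

print(f"mean of pH values:         {mean_of_ph:.2f}")
print(f"pH of mean concentration:  {ph_of_mean_conc:.2f}")
```

The two procedures give roughly 6.55 and 5.30, which differ by more than a full pH unit. Neither describes any liquid that actually exists, and nothing in the data says which one to prefer.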

Grand Ambiguity

Cohler is not the first to point out that the concept of a global temperature average is physically meaningless. A 2007 paper by Christopher Essex et al. was titled “Does a Global Temperature Exist?” The abstract states (my brackets):

“Distinct and equally valid [or invalid] statistical rules can and do show opposite trends when applied to the results of computations from physical models and real data in the atmosphere. A given temperature field can be interpreted as both ‘warming’ and ‘cooling’ simultaneously, making the concept of warming in the context of the issue of global warming physically ill-posed.”

This is all the more salient in a world with warming biases at poorly sited weather stations and a strong urban heat island effect.
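The abstract's claim that different averaging rules can show opposite trends is easy to reproduce with a toy case. The sketch below is not the paper's data or method. It assumes two hypothetical stations, invented temperatures in kelvin, and a comparison between the arithmetic mean and a fourth-power mean, a choice made here purely for illustration.

```python
def power_mean(values, r):
    """Generalized (power) mean of positive values; r = 1 is the arithmetic mean."""
    return (sum(v ** r for v in values) / len(values)) ** (1.0 / r)


# Hypothetical readings (kelvin) at two stations, at two points in time.
before = [280.0, 300.0]
after = [268.0, 311.0]

# Change in the "average" under each rule.
arith_change = power_mean(after, 1) - power_mean(before, 1)
fourth_change = power_mean(after, 4) - power_mean(before, 4)

print(f"arithmetic mean change:   {arith_change:+.2f} K")
print(f"fourth-power mean change: {fourth_change:+.2f} K")
```

With these numbers the arithmetic mean falls by half a degree while the fourth-power mean rises by more than a degree. The same pair of temperature fields is "cooling" under one rule and "warming" under the other.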

My Glass House?

Of course, there are other areas in which similar statistical “sins” are common, some of which are also committed repeatedly by climate alarmists. One is ocean water pH, which Cohler explains cannot be averaged across “parcels”; the result is meaningless. If that isn’t enough for you to harbor doubts about the ocean acidification narrative, just read the first few paragraphs of the tweet linked above!

Similar examples occur in the world of economic data. For example, prices are intensive measures, but economists often refer to an aggregate “price level”. Can such a thing truly exist? Simply averaging prices of all goods and services creates a meaningless figure. Each price can be weighted in a variety of ways (e.g., by shares of a fixed or varying “market basket”). There are several prominent alternatives, all of which have strengths and weaknesses, but none has a claim as an accurate measure of “the price level.”
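As a rough illustration of how the weighting choice matters, the sketch below computes two textbook price indices, Laspeyres (base-period quantities as weights) and Paasche (current-period quantities as weights), along with an unweighted mean of price ratios. The two goods, their prices, and their quantities are all hypothetical.

```python
def laspeyres(p0, p1, q0):
    """Cost of the base-period basket at new prices relative to old prices."""
    return sum(p * q for p, q in zip(p1, q0)) / sum(p * q for p, q in zip(p0, q0))


def paasche(p0, p1, q1):
    """Cost of the current-period basket at new prices relative to old prices."""
    return sum(p * q for p, q in zip(p1, q1)) / sum(p * q for p, q in zip(p0, q1))


# Hypothetical two-good economy: the first good doubles in price and
# buyers shift away from it toward the second good.
p0, q0 = [1.0, 4.0], [10.0, 5.0]   # base-period prices and quantities
p1, q1 = [2.0, 4.0], [4.0, 8.0]    # current-period prices and quantities

l_index = laspeyres(p0, p1, q0)
p_index = paasche(p0, p1, q1)
simple_mean = sum(b / a for a, b in zip(p0, p1)) / len(p0)

print(f"Laspeyres:             {l_index:.3f}")
print(f"Paasche:               {p_index:.3f}")
print(f"Unweighted mean ratio: {simple_mean:.3f}")
```

The same price changes register as inflation of roughly 33%, 11%, or 50% depending on which rule is applied.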

In fact, though economists talk about it constantly, it can be said that “the price level” does not exist as an objective reality, just as there is no “global temperature.” The difference is that economists readily acknowledge this fundamental ambiguity surrounding price aggregation. Some even insist, for example, that only nominal aggregates (e.g., total spending = prices × quantities), rather than inflation in “the price level”, be considered in certain policy domains, though there is more than one reason for that preference. In contrast, climate officialdom, within organizations such as the IPCC and NOAA, is loath to acknowledge weaknesses in GMST.

Conclusion

There are many reasons to question the climate orthodoxy, which holds that human emissions of carbon dioxide, a trace gas, produce a warming global temperature trend. An issue that’s been largely taken for granted is the integrity of the so-called global temperature, most commonly the GMST. The reality is that it’s impossible to identify a unique method of calculating a global temperature. It’s possible to specify many different aggregations of local temperature readings, but there is no “true” way of measuring global temperature. Another way of putting this is that it’s impossible to define a single global temperature as a physical reality. There is no such thing.

Nevertheless, global temperature is a critical pillar on which climate alarmism rests, and Cohler has published equally damning critiques of several other climate measurements (also see here), such as mean ocean pH, ocean heat content, and human contribution to atmospheric CO2. Climate authorities should acknowledge the inherent weakness of relying on temperature aggregations, and especially any one aggregation. Perhaps they could define several alternatives, as economists have with price indices, acknowledging the impossibility of pinning down a true global temperature.

The real lesson here is that we should approach climate statistics with both skepticism and humility. Even if you must pretend that it exists, any measure of a so-called global temperature and its trend is of highly uncertain value. This is critical when it comes to assessing climate policy. As Jack Salmon says in a somewhat broader context:

“One of the most striking features of modern climate economics is not consensus, it’s dispersion. Depending on which paper, model, or administration you consult, the economic damages from climate change range from modest to catastrophic.”