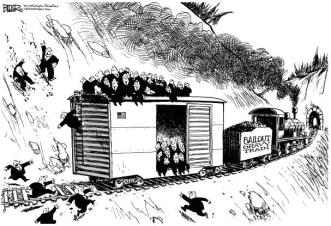

City leaders in St. Louis and Kansas City are the latest to fantasize that market manipulation can serve as a pathway to “economic justice”. They want to raise the local minimum wage to $15 by 2020, following similar actions in Los Angeles, Oakland and Seattle. They will harm the lowest-skilled workers in these cities, not to mention local businesses, their own local economies and their own city budgets. Like many populists on the national level with a challenged understanding of market forces (such as Robert Reich), these politicians won’t recognize the evidence when it comes in. If they do, they won’t find it politically expedient to own up to it. A more cynical view is that the hike’s gradual phase-in may be a deliberate attempt to conceal its negative consequences.

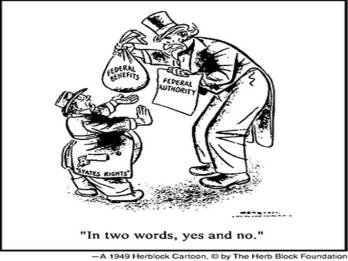

There are many reasons to oppose a higher minimum wage, or any minimum wage for that matter. Prices (including wages) are rich with information about demand conditions and scarcity. They provide signals for owners and users of resources that guide them toward the best decisions. Price controls, including wage floors like the minimum wage, short-circuit those signals and are notorious for their disastrous unintended (but very predictable) consequences. Steve Chapman at Reason Magazine discusses the mechanics of such distortions.

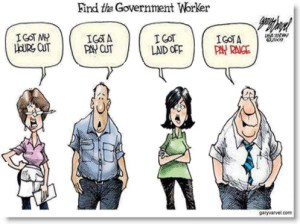

Supporters of a higher minimum wage usually fail to recognize the relationship between wages and worker productivity. That connection is why the imposition of a wage floor leads to a surplus of low-skilled labor. Those with the least skills and experience are the most likely to lose their jobs, work fewer hours or not be hired. In another Reason article, Brian Doherty explains that this is a thorny problem for charities providing transitional employment to workers with low skills or limited employability. He also notes the following:

“All sorts of jobs have elements of learning or training, especially at the entry level. Merely having a job at all can have value down the line worth enormously more than the wage you are currently earning in terms of a proven track record of reliable employability or moving up within a particular organization.”

The negative employment effects of a higher wage floor are greater if the employer cannot easily pass higher costs along to customers. That’s why firms in highly competitive markets (and their workers) are more vulnerable. This detriment is all the worse when a higher wage floor is imposed within a single jurisdiction, such as the city of St. Louis. Bordering municipalities stand to benefit from the distorted wage levels in the city, but the net effect will be worse than a wash for the region, as adjustments to the new, artificial conditions are not costless. Again, it is likely that the least capable workers and least resourceful firms will be harmed the most.

The negative effects of a higher wage floor are also greater when substitutes for low-skilled labor are available. Robots that take fast-food orders already exist, as one video demonstrates. In fact, today there are robots capable of preparing meals, mopping floors, and performing a variety of other menial tasks. Alternatively, more experienced workers may be asked to perform more menial tasks or work longer hours. Either way, the employer takes a hit. Ultimately, the best alternative for some firms will be to close.

The impact of the higher minimum on the wage rates of more skilled workers is likely to be muted. A correspondent of mine mentioned the consequences of wage compression. From the link:

“In some cases, compression (or inequity) increases the risk of a fight or flee phenomonon [sic]–disgruntlement culminating in union organizing campaigns or, in the case of flee, higher turnover as the result of employees quitting. … all too often, companies are forced to address the problem by adjusting their entire compensation systems–usually upward and across-the-board. … While wage adjustments may sound good for those who do not have to worry about profits and losses, the real impact for a company typically means it must either increase productivity or lay people off.”

For those who doubt the impact of the minimum wage hike on employment decisions, consider this calculation by Mark Perry:

“The pending 67% minimum wage hike in LA (from $9 to $15 per hour by 2020), which is the same as a $6 per hour tax (or $12,480 annual tax per full-time employee and more like $13,500 per year with increased employer payroll taxes…)….”
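The arithmetic behind Perry's figures can be checked with a short sketch. It assumes a full-time schedule of 40 hours a week for 52 weeks (2,080 hours), which is what the $12,480 figure implies, and an employer payroll tax rate of 7.65%, which is an assumption not specified in the quote. The function and variable names are purely illustrative.

```python
# Rough check of the per-employee cost of a minimum wage hike.
# Assumptions (not stated in the quoted passage):
#   - full-time means 40 hours/week for 52 weeks (2,080 hours/year)
#   - employer payroll taxes add 7.65% on top of wages

HOURS_PER_WEEK = 40
WEEKS_PER_YEAR = 52
EMPLOYER_PAYROLL_TAX_RATE = 0.0765  # assumed rate, for illustration only


def wage_hike_cost(old_wage, new_wage, payroll_tax_rate=EMPLOYER_PAYROLL_TAX_RATE):
    """Return the hourly, percentage and annual cost of a wage-floor increase."""
    hourly_increase = new_wage - old_wage
    percent_increase = 100 * hourly_increase / old_wage
    annual_hours = HOURS_PER_WEEK * WEEKS_PER_YEAR
    annual_increase = hourly_increase * annual_hours
    annual_with_tax = annual_increase * (1 + payroll_tax_rate)
    return {
        "hourly_increase": hourly_increase,
        "percent_increase": percent_increase,
        "annual_increase": annual_increase,
        "annual_with_tax": annual_with_tax,
    }


result = wage_hike_cost(9.00, 15.00)
print(f"Hourly increase:        ${result['hourly_increase']:.2f}")
print(f"Percentage increase:    {result['percent_increase']:.0f}%")
print(f"Annual cost per worker: ${result['annual_increase']:,.2f}")
print(f"With payroll tax:       ${result['annual_with_tax']:,.2f}")
```

Under these assumptions the $6 hourly increase comes to $12,480 a year, and roughly $13,435 once the assumed payroll tax is included, which is in the neighborhood of Perry's “more like $13,500”.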

Don Boudreaux offers another interesting perspective, asking whether a change in the way the minimum wage is enforced might influence opinion:

“... if these policies were enforced by police officers monitoring workers and fining those workers who agreed to work at hourly wages below the legislated minimum – would you still support minimum wages?”

Proponents of a higher minimum wage often cite a study from 1994 by David Card and Alan Krueger purporting to show that a higher minimum wage in New Jersey actually increased employment in the fast food industry. Tim Worstall at Forbes discussed a severe shortcoming of the Card/Krueger study (HT: Don Boudreaux): Card and Krueger failed to include more labor-intensive independent operators in their analysis, instead focusing exclusively on employment at fast-food chain franchises. The latter were likely to benefit from the failure of independent competitors.

Another common argument put forward by supporters of higher minimum wages is that economic theory predicts positive employment effects if employers have monopsony power in hiring labor, that is, the power to influence the market wage. This is a stretch: it describes labor market conditions in very few localities. Of course, any employer in an unregulated market is free to offer noncompetitive wages, but that employer will suffer the consequences: less skilled and less experienced hires, higher labor turnover and ultimately a competitive disadvantage. Such forces lead rational employers to offer competitive wages for the skill levels they require.

Minimum wages are also defended as an anti-poverty program, but this is a weak argument. A recent post at Coyote Blog explains “Why Minimum Wage Increases are a Terrible Anti-Poverty Program”. Among other points:

“Most minimum wage earners are not poor. The vast majority of minimum wage jobs are held as second jobs or held by second earners in a household or by the kids of affluent households. …

Most people in poverty don’t make the minimum wage. In fact, the typically [sic] hourly income of the poor appears to be around $14 an hour. The problem is not the hourly rate, the problem is the availability of work. The poor are poor because they don’t get enough job hours. …

Many young workers or poor workers with a spotty work record need to build a reliable work history to get better work in the future…. Further, many folks without much experience in the job market are missing critical skills — by these I am not talking about sophisticated things like CNC machine tool programming. I am referring to prosaic skills you likely take for granted (check your privilege!) such as showing up reliably each day for work, overcoming the typical frictions of working with diverse teammates, and working to achieve management-set goals via a defined process.”

Some of the same issues are highlighted by the Show-Me Institute, a Missouri think tank, in “Minimum Wage Increases Not Effective at Fighting Poverty”.

A higher minimum wage is one of those proposals that “sound good” to the progressive mind, but are counter-productive in the extreme. The cities of St. Louis and Kansas City would do well to avoid market manipulation that is likely to backfire.