There have been many claims about the relative frequency of violent terrorist acts committed by the left and right sides of the political spectrum. Leftists like to focus on fatalities because they believe the data favor them as less violent. Broader measures of violence tell a different story. However, the comparisons are terribly flawed owing to the difficulty of 1) knowing that ideology was definitely the motive in a particular case; and 2) classifying the ideology of a violent actor. Law enforcement statistics are obviously subject to those kinds of classification problems, and so are most studies that purport to measure ideological violence accurately. In short, the comparisons are a mess.

Ideological Homicide

The following are counts of total ideologically-motivated homicides since 1990 according to a 2024 DOJ National Institute of Justice report. Excluding the 9/11/2001 Twin Towers attack, there were 520 total fatalities; 227 were attributed to the far right and 42 to the far left. That report is now available only as an archive on the Wayback Machine. The online PDF disappeared just after Charlie Kirk’s murder in September, which seems a little too coincidental. Nevertheless, as we’ll see, the report’s findings were absurd.

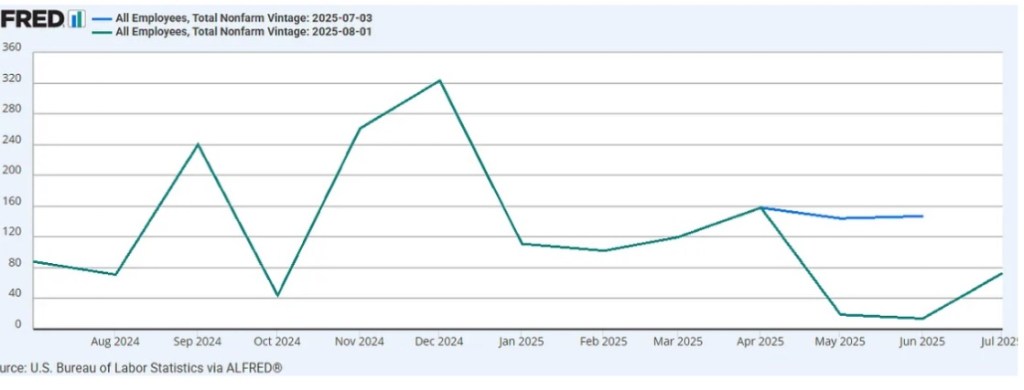

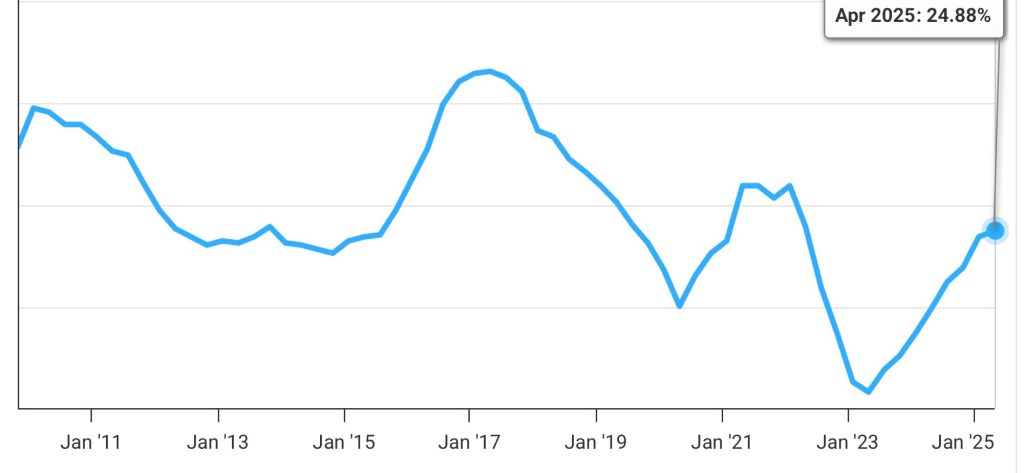

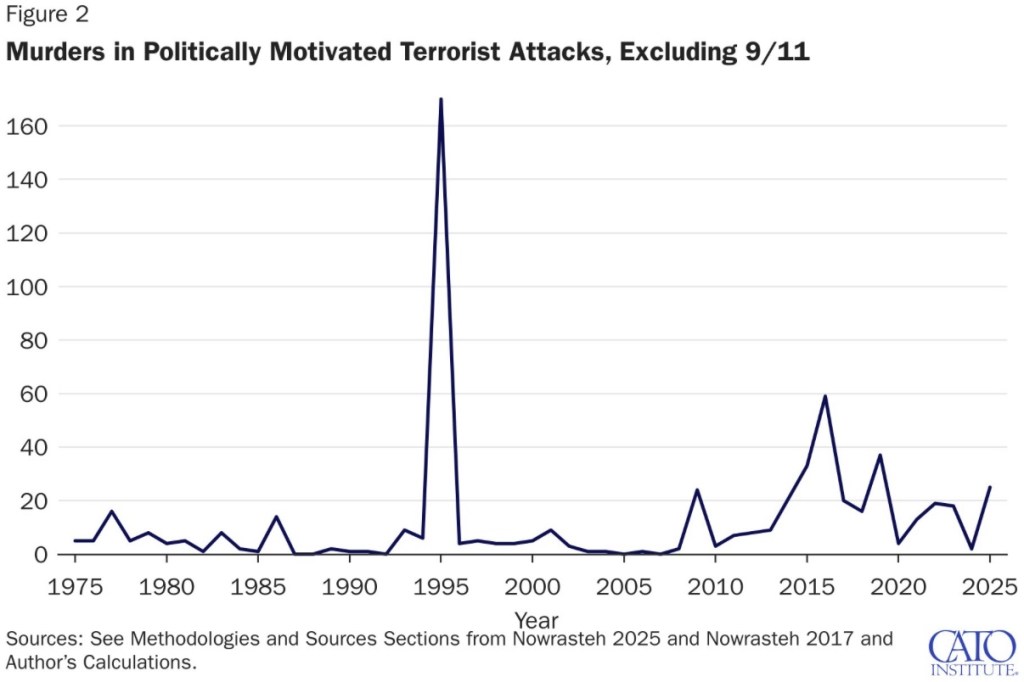

Matt Margolis reviews a recent CATO study by Alex Nowrasteh on politically-motivated violence. Here are the totals by year:

Margolis discusses a couple of major (and questionable) decisions made by the author or his sources:

—The Oklahoma City bombing in 1995 (168 deaths) was committed by Timothy McVeigh, an individual of ambiguous “anti-government” political persuasion who supported abortion rights. Those deaths were attributed to the right.

—The 2020 riots following George Floyd’s death resulted in 19 deaths. Of course, Antifa (which we’re confidently told doesn’t exist) and Black Lives Matter (BLM) were heavily involved, so this was clearly leftist violence. Those deaths aren’t counted.

These two adjustments alone would swing the attribution of deaths to a majority of leftist killers. Margolis then credits Amber Duke for identifying several additional misclassifications that occurred between 2015 and 9/10/2025 (the day prior to Kirk’s murder), during which there were 57 “politically-inspired” killers. She documents nine cases (26 fatalities and a number of serious injuries) of questionable political attribution. Several of these cases involved motives that are arguably nonpolitical, including severe psychological disorders, and at least one killer could have been motivated by a desire to promote a leftist politician (Tim Walz). I would probably accept a couple of these incidents as right-adjacent if not right-wing motivated, but the point is that ambiguities frequently compromise these ideological classifications.

Duke notes the head-scratching exclusion of a couple of incidents attributable to leftist passions: the BLM-affiliated Waukesha killer who plowed into a Christmas parade in his truck, killing six; a killing perpetrated by multiple BLM protestors; and a bomber at an IVF clinic who killed one person. Again, in the nine cases identified by Duke, the perps were either questionably classified ideologically or not classified at all. Correcting all of these errors swings the tally to 20 left-wing and 19 right-wing killers from 2015 through 9/10/2025.

Oddly, Duke takes no issue with the non-classification of the Pulse Nightclub shooting in 2016 (treated separately as Islamic terror). The killer was said to have had “issues” with gays, but apparently he was gay! And there were reports that he was motivated by opposition to U.S. foreign policy, which usually codes as left.

The ADL Weighs In

Duke also directs us to a critique by Ryan James Girdusky of some agitprop produced by the Anti-Defamation League (ADL). Of course, the ADL has a left-wing bias that comes through loud and clear in this report. As Duke summarizes,

“… they lump white nationalist inter-gang killings, domestic violence, and other non-ideologically motivated murders into its ‘right-wing political violence’ category.”

And here is David Harsanyi:

“The [ADL] list includes murders that occurred during attempted prison escapes, sex crimes, robberies, and family squabbles, none of which have anything to do with furthering the tenets of white supremacy or any cause. In one of the incidents, the police have yet to find a motive for the homicide.”

In case you still harbor any doubt about the ADL’s bias, their report actually excludes six deaths connected with the Zizians, a murderous trans cult. They also ascribe no political motive to Luigi Mangione’s assassination of UnitedHealthcare CEO Brian Thompson because:

“… hostility towards the healthcare system or health insurance companies is not in itself an ideology and because a good portion of the anger on Mangione’s part may have stemmed from purely personal reasons .…”

Yet, as Harsanyi observed, the same list counts as “right-wing” a number of murders with no apparent ideological motive, including one for which police have yet to find any motive at all.

Harsanyi offers further criticism of the FBI’s classifications and the Global Terrorism Database. Of the latter, he notes:

“It counted the Las Vegas mass shooter who murdered 59 people in 2017 as a right-wing ‘anti-government extremist.’ In truth, we have no clue what the shooter’s motivations were, unless the GTD has inside information from the FBI. Of the 32 other incidents the organization labeled right-wing terrorism that year, 12 were merely ‘suspected’ of being on the Right (mostly because they had white skin).”

More Systemic Misclassification

The CATO and ADL reports, as well as government statistics, are uniform in treating violence by Islamic extremists as a category apart from violence on the left and right. The Islamist category dominates the data on terrorist homicides due to 9/11 (87% of terrorist fatalities since 1975; excluding 9/11, Islamist attacks account for 23%). Separate treatment is based on alleged religious motives behind these acts. However, Islamic causes have garnered increasing support from the Left in the years since 9/11 and the War in Iraq. That became more palpable during the Obama years. It has culminated in a surge of aggressive anti-Zionism and support for not just a Palestinian state, but one extending from the river to the sea, which is code for genocide in Israel. Apparently, Hamas’ murderous raid into Israel on 10/7/2023 was a major touch point for this activism.

At present, there isn’t much ambiguity surrounding the leftist-Islamic alignment, despite what should seem like obvious points of tension. These include Islamic subjugation of women and harsh treatment of homosexuals. But in the West, leftists identify with the presumed victimhood status of Islamic populations. Certain cases of violence by Islamic actors in the U.S. can reasonably be counted now as leftist terror attacks. However, the reports aren’t tallied that way, which helps to foster the impression that the right instigates a larger share of violent and homicidal attacks.

Also problematic: “anti-government” actors have almost always been classified as right-wing. This is highly misleading. Left- and right-wing anti-government violence both tend to vary with which side is in power.

Non-Lethal But Could Be Lethal

Despite its severe shortcomings, the CATO report at least pays lip service to non-lethal violence … or what, but for the grace of God, might have turned out to be lethal. This encompasses foiled efforts to harm, injuries of all sorts, arson, smashed windows, stolen merchandise, other property damage, and even threats to individuals or institutions, which tend to inflict emotional distress and other costs. Too much commentary hints at praise for the left’s “restraint” in perpetrating non-lethal violence! Protests accompanied by riots are described as “mostly peaceful”.

There is no question about the recent surge in left-wing violence, especially in 2025. Over the past few years, there have been several assassination attempts against high-profile individuals on the right, including those on Donald Trump and the one that killed Charlie Kirk. Trans activists have perpetrated other killings. There have been multiple attacks on ICE agents and other law enforcement officers. We’ve witnessed persecution, intimidation, and attacks against Jews on college campuses and elsewhere. Riots have erupted in Portland, LA, New York, Atlanta, and other cities. The trend is not new, but the levels of unrest have been disquieting.

They Say, “Don’t Overreact”

Another factor is prosecutorial leniency. Violent leftist actions tend to be concentrated in urban areas where prosecutors are likely to share the actors’ ideology and give them a pass. This does nothing to discourage destructive behavior. As a civil libertarian, however, Nowrasteh warns in his CATO report:

“The big fear from politically motivated terrorism is that the pursuit of justice will overreach, result in new laws and policies that overreact to the small threat, and end up killing far more people while diminishing all our freedoms.”

I too have strong libertarian leanings, but the balance of risks should favor action to protect individuals and their property from threats of violence and to maintain public order, without prejudging disturbances as “peaceful”.

Views on Violence

Official statistics and other reports on political violence by the left and right are unreliable. They tend to overstate right-wing inspired violence and understate left-wing inspired violence. The recent swing toward leftist acts of terror has been difficult to hide, however.

I’ll close by noting that the mainstream right and left seem to have considerably different attitudes toward politically-motivated violence. In my observation, when some fringe right-wing maniac, white supremacist, or militia group perpetrates violence (or so much as stages a public demonstration), the mainstream right tends to react with revulsion. When fringe leftists do the same, the mainstream left tends to rationalize and even support the uglies.

The Network Contagion Research Institute (NCRI) has noted the rise of “assassination culture”. Surveys show that violence against political opposition has more support from the left than the right. Social media has become a breeding ground upon which these sentiments can turn into action. From the last link:

“NCRI’s analysis, based on troves of social media data, reveals how fringe internet culture has helped build what the group calls ‘permission structures’ for violence. These are social environments—online or offline—where violent acts are no longer condemned but tacitly accepted, if not outright encouraged.”

This is what Christopher Rufo calls the “left-wing terror memeplex”, and it’s often less tacit than right out loud! It’s almost enough to make a sham of the explicit exceptions to protected speech defined by the First Amendment.